In the first of a series on DDR, the author looks at bus concept basics.

The DDR bus is one of the widest commercial high-speed busses, if not the widest. Designing it comes with a set of challenges that can be intimidating to designers new to DDR. Fundamentally, though, the bus is quite simple, with just a few significant concepts to keep in mind. Understanding its building blocks goes a long way toward understanding its key design requirements.

The bus consists of a single controller at one end and a set of one or more DRAM chips at the other. Generally speaking, the greater the memory capacity the system requires, the more DRAMs are needed. The controller performs two major operations: writing data to the DRAMs and reading data from them.

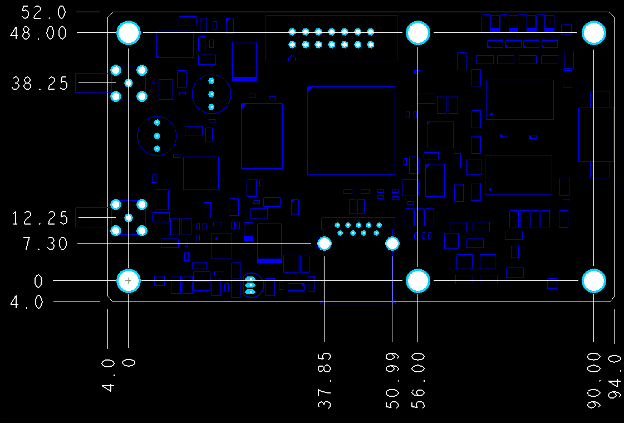

How DDR works. These operations are carried out using two sets of signals: the data bus and the address/command bus, illustrated in FIGURE 1.

Figure 1. DDR uses two sets of signals, like most memory.

The bidirectional data bus is broken up into lanes. Each lane consists of its own DQS (strobe) signal and the associated data signals. Lanes commonly consist of eight data bits. However, DRAM parts with just four data bits also exist, so some lanes may consist of just four data bits. Regardless of the number of bits in a lane, each data bit is latched on a transition of that lane's DQS. The data signals are all single-ended. The DQS signals for DDR3 and DDR4 are always differential; for DDR2, the DQS signal may be either single-ended or differential.
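The lane structure reduces to simple arithmetic. The sketch below is illustrative only: it assumes a hypothetical 64-bit data bus whose data bits are numbered contiguously, so that each strobe covers one consecutive group of bits (real pinouts may swap bits within a lane). The function names are made up for this example.

```python
def lane_count(bus_width: int, bits_per_lane: int) -> int:
    """Number of lanes, and therefore DQS strobes, on a data bus."""
    if bus_width % bits_per_lane != 0:
        raise ValueError("bus width must be a multiple of the lane width")
    return bus_width // bits_per_lane


def strobe_for_bit(dq: int, bits_per_lane: int) -> int:
    """Index of the DQS that latches data bit `dq`, assuming contiguous numbering."""
    return dq // bits_per_lane


# Hypothetical 64-bit bus built from x8 parts, then from x4 parts.
print(lane_count(64, 8), strobe_for_bit(13, 8))  # 8 lanes; DQ13 belongs to DQS1
print(lane_count(64, 4), strobe_for_bit(13, 4))  # 16 lanes; DQ13 belongs to DQS3
```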

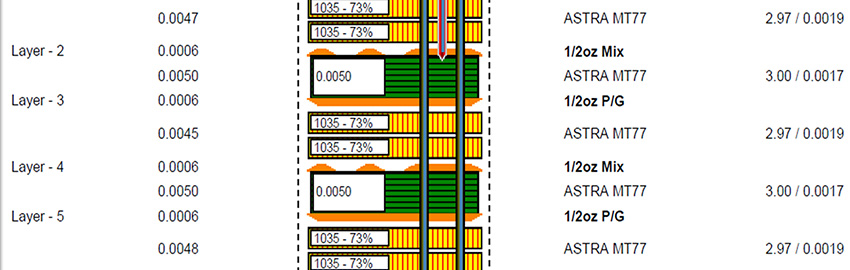

During write transactions, the controller launches the data signals approximately halfway between two DQS transitions (FIGURE 2), so that the data should be stable when the DQS transition occurs. The DRAM then latches in the data on that DQS transition. This is the first crucial element of the design: The input setup and hold time requirements at the DRAM must be met. Because the controller launches the data approximately halfway between two DQS transitions in order to center the DQS in the stable data, the layout must keep the propagation delays of the DQS and the data signals within a given lane closely matched; any mismatch moves the strobe away from the center of the data at the DRAM. The propagation delays across lanes are more flexible, since each strobe is used only for the data bits within its own lane.

Figure 2. For writes, the controller launches the signal approximately halfway between two DQS transitions.

During read transactions, the DRAM launches the data signals approximately edge-aligned with the DQS (FIGURE 3). It is then the controller’s job to delay the data and/or the strobe appropriately so that it can latch in the data using the DQS.

Figure 3. During read transactions, unlike writes, the data signals approximately line up with the DQS.

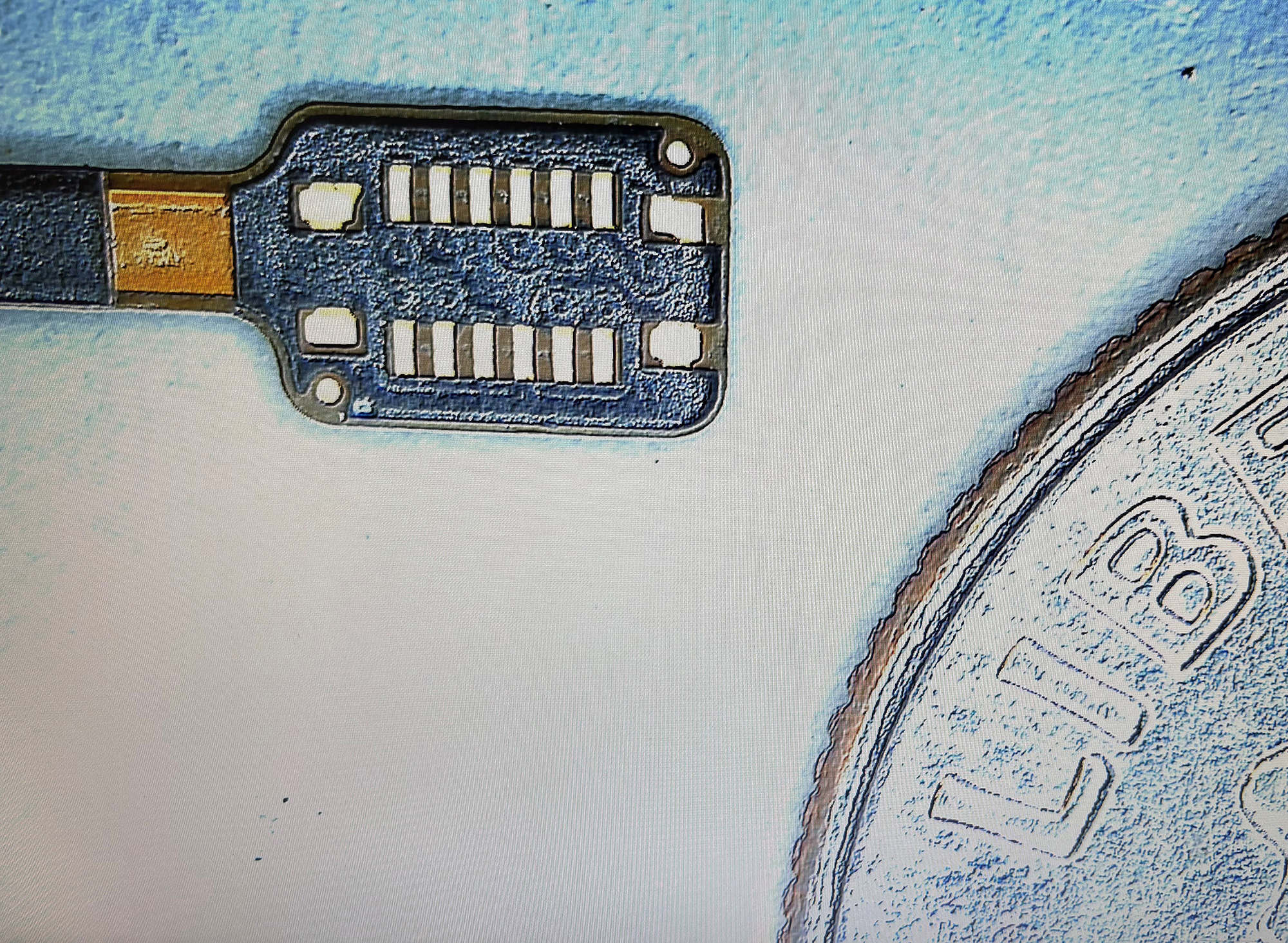
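One way to do this, assumed here since the choice is left to the controller, is to delay only the strobe so its edges land in the middle of the data bits. Ignoring the DRAM's own output skew, the required delay is half a UI (a quarter of the clock period), adjusted for any difference between the board delays of the data and the strobe. The function name and parameters are hypothetical.

```python
def read_dqs_delay_ps(data_rate_mtps: float,
                      dq_flight_ps: float = 0.0,
                      dqs_flight_ps: float = 0.0) -> float:
    """Strobe delay that centers an edge-aligned DQS in the data bit at the controller."""
    ui_ps = 1e6 / data_rate_mtps  # one unit interval in ps (1 / data rate)
    # Shift by half a UI, plus however much later the data arrives than the strobe.
    return ui_ps / 2 + (dq_flight_ps - dqs_flight_ps)


print(read_dqs_delay_ps(1600))                # 312.5 ps, a quarter of the 1250 ps clock period
print(read_dqs_delay_ps(1600, 510.0, 500.0))  # 322.5 ps when the data trails the strobe by 10 ps
```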

In a single-rank system, each lane accesses only one DRAM. In a multi-rank system, a given lane (the data bits and the strobe) can be connected to multiple DRAMs, but only one of them is accessed during any transaction. That DRAM is selected by asserting its Chip Select signal; the other DRAMs sharing the lane have their Chip Select signals deasserted. In general, the number of “ranks” in a system equals the number of Chip Selects in use, which in turn equals the number of DRAMs sharing a given lane.
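Rank selection amounts to driving exactly one Chip Select active. In DDR SDRAM the chip select (CS#) is active low, so the minimal sketch below returns 0 for the selected rank and 1 for all the others. The function is hypothetical and ignores everything else a controller drives on a command cycle.

```python
def chip_select_levels(num_ranks: int, target_rank: int) -> list[int]:
    """Logic levels for CS#[0..num_ranks-1] when accessing target_rank (active low)."""
    if not 0 <= target_rank < num_ranks:
        raise ValueError("target rank out of range")
    return [0 if rank == target_rank else 1 for rank in range(num_ranks)]


print(chip_select_levels(2, 1))  # [1, 0]: rank 1 selected, rank 0 is deselected
print(chip_select_levels(4, 0))  # [0, 1, 1, 1]
```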

The address/command bus consists of several address bits (the exact number depends on the size of the DRAMs being accessed), bits that specify the command being issued to the DRAM, and finally the clock. The bus is unidirectional: commands are sent only from the controller to the DRAMs.
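As a rough illustration of how DRAM size sets the address width: DDR SDRAM sends the row address and the column address over the same address pins in separate commands, and selects the bank on separate bank-address pins. The simplified sketch below ignores pins with extra duties (such as A10 carrying auto-precharge) and computes pin counts from a device geometry. The example uses the geometry of a typical 4 Gb x8 DDR3 part (65,536 rows, 1,024 columns, 8 banks); the actual part's datasheet is the authority.

```python
def address_pins(rows: int, columns: int, banks: int) -> tuple[int, int]:
    """Return (address pins, bank-address pins) for the given DRAM geometry."""
    def bits(n: int) -> int:
        return (n - 1).bit_length()  # ceil(log2(n)) for n >= 1

    # Row and column addresses share pins, so the wider of the two sets the count.
    return max(bits(rows), bits(columns)), bits(banks)


print(address_pins(65536, 1024, 8))  # (16, 3): A0-A15 and BA0-BA2
```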

The address and command signals are single-ended. The clock, however, is differential, and its timing is measured at the point where the + and – sides of the clock cross each other.
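When working with simulated or measured waveforms, the crossing point can be found by interpolating the difference between the two sides of the clock. The sketch below assumes both waveforms are equal-length lists of samples on a common time base; the function name, data layout, and sample values are illustrative.

```python
def crossing_times(t, v_pos, v_neg):
    """Times at which the + and - clock waveforms cross, by linear interpolation.

    A crossing that falls exactly on the last sample is not reported.
    """
    crossings = []
    for i in range(len(t) - 1):
        d0 = v_pos[i] - v_neg[i]          # differential voltage at sample i
        d1 = v_pos[i + 1] - v_neg[i + 1]  # differential voltage at sample i + 1
        if d0 == 0:
            crossings.append(t[i])        # sample lands exactly on the crossing
        elif d0 * d1 < 0:                 # sign change: crossing inside this interval
            frac = d0 / (d0 - d1)
            crossings.append(t[i] + frac * (t[i + 1] - t[i]))
    return crossings


# Coarse, made-up samples (ns and V) spanning one high phase of the clock.
print(crossing_times([0, 1, 2, 3], [0.0, 1.0, 1.0, 0.0], [1.0, 0.0, 0.0, 1.0]))  # [0.5, 2.5]
```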

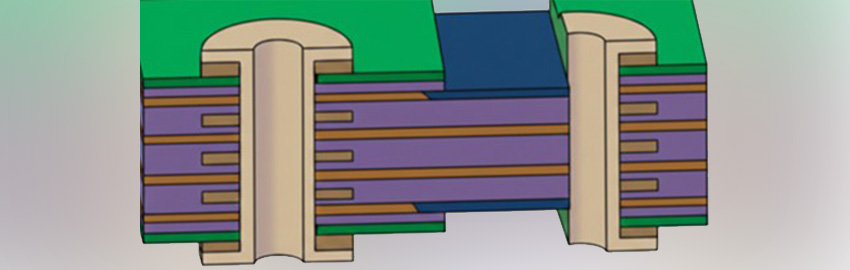

To issue a command, the controller launches the appropriate command and address signals approximately when the clock signal is going low (FIGURE 4).

The signals stay stable through the following rising edge of the clock and then transition to the next command on the subsequent falling edge. This way, the command is stable during the clock's rising transition.

Figure 4. For commands, the command and address are launched by the controller approximately at the time the clock signal is going low.

This leads to the second important design consideration: The setup and hold time requirements for the address/command signals must be met at the DRAM. Because the controller sends the signals so that the address/command bits are stable at the clock’s rising edge, the address/command signals must be delayed by the same amount as the clock. Since the address/command bus is shared across different DRAM chips, the delay (including all SI effects) from the controller to each DRAM must be the same for all the address bits as for the clock. So even though the delay may vary from one DRAM to another, the address and clock delays must line up at any given DRAM.
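This requirement can be checked per DRAM: compare each address/command net's delay with the clock delay at the same DRAM, without comparing DRAMs against each other. In the sketch below, the reference designators, net names, delays, and the 10 ps tolerance are all hypothetical.

```python
def cmd_clock_skew(clock_delay_ps, net_delays_ps, tolerance_ps):
    """Worst address/command-to-clock skew at each DRAM and whether it is in tolerance."""
    report = {}
    for dram, clk in clock_delay_ps.items():
        worst = max(abs(d - clk) for d in net_delays_ps[dram].values())
        report[dram] = (worst, worst <= tolerance_ps)
    return report


clock = {"U1": 400.0, "U2": 550.0}  # the clock may reach each DRAM at a different time
nets = {
    "U1": {"A0": 405.0, "BA0": 390.0, "CS0": 398.0},
    "U2": {"A0": 565.0, "BA0": 548.0, "CS0": 552.0},
}
print(cmd_clock_skew(clock, nets, tolerance_ps=10.0))
# {'U1': (10.0, True), 'U2': (15.0, False)}
```

U1 passes even though its clock arrives 150 ps earlier than U2's; only the skew between the address/command nets and the clock at the same DRAM matters.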

Finally, there is a timing requirement at the DRAM between DQS and CLK, which ties the data and address busses together: DQS and CLK need to approximately line up at each DRAM. To ensure this, DDR2 required that the delays from the controller to every DRAM for each of the DQS signals be equal to one another, and equal overall to the delay of the clock from the controller to the DRAM.

Fly-by routing. DDR3 introduced a new concept called fly-by routing, which permits the address and clock signals to start at the controller and touch each DRAM in sequence. This, however, means the clock reaches each DRAM at a different time. If the DQS signals are routed to similar lengths, there is no guarantee that the DQS/CLK requirement will be met.

In this case, the controller must be able to internally delay each DQS signal enough that it reaches its DRAM at approximately the same time as the clock.
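The per-lane delay follows from the difference between the clock's arrival time at each lane's DRAM and the DQS flight time to that DRAM. The minimal sketch below handles only the phase of that alignment: if the DQS already arrives after the clock edge, it is pushed to the following edge by wrapping the result into one clock period, and the cycle-level bookkeeping a real controller also performs is left out. The function name and all delays are hypothetical, for a DDR3-1600 clock (1250 ps period).

```python
def dqs_delays_for_fly_by(clock_arrival_ps, dqs_flight_ps, t_ck_ps):
    """Extra delay per lane so each DQS reaches its DRAM in phase with the clock there."""
    delays = {}
    for lane, t_clk in clock_arrival_ps.items():
        # Wrap into [0, t_ck_ps): the controller can only add delay, so a DQS that
        # already arrives after the clock edge is aligned to the next edge instead.
        delays[lane] = (t_clk - dqs_flight_ps[lane]) % t_ck_ps
    return delays


# The clock reaches the DRAMs in sequence along the fly-by chain...
clock_arrival = {0: 300.0, 1: 420.0, 2: 540.0, 3: 660.0}
# ...while the DQS traces are routed to similar lengths.
dqs_flight = {0: 250.0, 1: 250.0, 2: 250.0, 3: 250.0}
print(dqs_delays_for_fly_by(clock_arrival, dqs_flight, t_ck_ps=1250.0))
# {0: 50.0, 1: 170.0, 2: 290.0, 3: 410.0}
```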

Note that for DDR2, and for controllers that don’t support the fly-by topology, the clock and DQS signal delays still need to be matched to each other.

Next, I will move on to more practical topics: high-speed PCB design and simulation.

Nitin Bhagwath is technical marketing engineer at Mentor Graphics (mentor.com).